Accepting that numbers can do strange, new things is one of the toughest parts of math:

- There are numbers between the numbers we count with? (Yes — decimals)

- There’s a number for nothing at all? (Sure — zero)

- The number line is two dimensional? (You bet — imaginary numbers)

Calculus is a beautiful subject, but challenges some long-held assumptions:

- Numbers don’t have to be perfectly accurate?

- Numbers aren’t just scaled-up versions of each other (i.e. 1 times some number)?

Today’s post introduces a new way to think about accuracy and infinitely small numbers. This is not a rigorous course on analysis — it’s my way of grappling with the ideas behind Calculus.

Counting Numbers vs. Measurement Numbers

Not every number is the same. We don’t often consider the difference between the “counting numbers” (1, 2, 3…) and the “measuring numbers” like 2.58, $\pi$, $\sqrt{2}$.

Our first math problems involve counting: we have 5 apples and remove 3, or buy 3 books at \$10 each. These numbers change in increments of 1, and everything is nice and simple.

We later learn about fractions and decimals, and things get weird:

- What’s the smallest fraction? (1/10? 1/100? 1/1000?)

- What’s the next decimal after 1.0? 1.1? 1.001?

It gets worse. Numbers like $\sqrt{2}$ and π go on forever, without a pattern. Numbers “in the real world” have all sorts of complexity not found in our nice, chunky counting numbers.

We’re hit with a realization: we have limited accuracy for quantities that are measured, not counted.

What do I mean? Find the circumference of a circle of radius 3. Oh, that’s easy; plug r=3 into circumference = 2 * pi * r and get 6*pi. Tada!

That’s cute, but you didn’t answer my question — what number is it?

You may pout, open your calculator and say it’s “18.8495…”. But that doesn’t answer my question either: What, exactly, is the circumference?

We don’t know! Pi continues forever, and though we know trillions of digits, there are infinitely many more. Even if we knew what pi was, where would we write it down? We really don’t know the exact circumference of anything!

But hush hush — we’ve hidden this uncertainty behind a symbol, π. When you see π in an equation it means “Hey buddy, you know that number, the one related to circles? When it’s time to make a calculation, just use the closest approximation that works for you.”

Again, that’s what the symbol means — we don’t know the real number, so use your best guess. By the way, e and $\sqrt{2}$ have the same caveat.
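To make "use the closest approximation that works for you" concrete, here is a small sketch that computes the circumference of the radius-3 circle using pi cut off at different lengths. The `circumference` helper and the hard-coded `PI_DIGITS` string are my own illustration, not a standard routine.

```python
from decimal import Decimal, getcontext

# First 50 decimal places of pi, hard-coded for illustration
PI_DIGITS = "3.14159265358979323846264338327950288419716939937510"

def circumference(radius, digits):
    """Circumference 2*pi*r, with pi truncated to `digits` decimal places."""
    getcontext().prec = 60  # enough working precision for 50 digits of pi
    pi_approx = Decimal(PI_DIGITS[: 2 + digits])  # "3." plus `digits` digits
    return 2 * pi_approx * Decimal(radius)

# Each extra digit of pi nudges the answer by a smaller and smaller amount
for digits in (2, 4, 10, 40):
    print(digits, circumference(3, digits))
```

None of the printed values is "the" circumference. Each one is a guess that is good enough at some scale, and the later guesses differ only far to the right of the decimal point.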

40 digits of pi should be enough for anyone

We think uncertainty is chaos: how can you build a machine unless you know the exact sizes of its parts?

But as it turns out, the “closest approximation of pi that works for us” tends to be surprisingly short. Yes, we’ve computed pi to trillions of digits, but we only need about 40 for any practical application.

Why? Consider this:

- Atoms are about 1e-11 meters across

- The universe is about 90 billion light years (1e27 meters) wide

Dividing it out, it takes about 1e38 (1e27 / 1e-11) atoms to span the universe. So, around 40 digits of pi would be enough for an exact count of atoms needed to surround the universe. Were you planning on building something larger than the universe and precise to an atomic level? (If so, where would you put it?)
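The division above is easy to check. This sketch uses the same rough sizes as the text; the constant names are hypothetical, and the figures are order-of-magnitude estimates rather than measurements.

```python
import math

ATOM_WIDTH_M = 1e-11      # rough atom size used in the text
UNIVERSE_WIDTH_M = 1e27   # rough width of the universe used in the text

# How many atoms, laid side by side, span the universe?
atoms_across = UNIVERSE_WIDTH_M / ATOM_WIDTH_M

# Number of decimal orders of magnitude separating the two scales
orders_of_magnitude = round(math.log10(atoms_across))

print(f"atoms across the universe: about {atoms_across:.0e}")
print(f"orders of magnitude between the scales: {orders_of_magnitude}")
```

The gap between the largest and smallest scales is about 38 orders of magnitude, which is why roughly 40 digits is plenty.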

And that’s just 40 digits of precision; 80 digits covers us in case there’s a mini-universe inside each of our atoms, and 120 digits in case there’s another mini-universe inside of that one.

The point is our instruments have limited precision, and there’s a point where extra detail just doesn’t matter. Pi could become a sudoku puzzle after the 1000th digit and our machines would work just fine.

But I need exact numbers!

Accepting uncertainty is hard: what is math if not accurate and precise? I thought the same, but started noticing how often we’re tricked in the real world:

Our brains are fooled into thinking 24 images per second is the same as fluid motion.

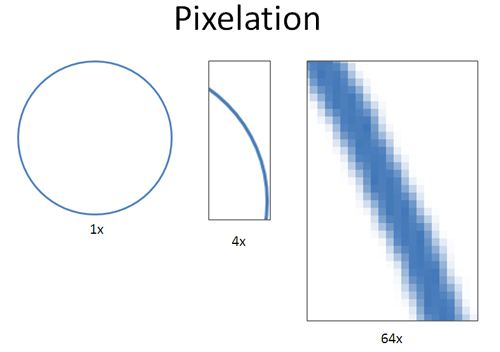

Every digital photo (and every printed one, too!) is made from tiny pixels. Pictures seem smooth until you zoom in:

The big secret is that every digital photo is pixelated: we only call it pixelated when we happen to notice the pixels. Otherwise, when the squares are tiny enough we’re fooled into thinking we have a smooth picture. But it’s just smooth for human eyes.

This happens to mechanical devices also. At the atomic level, there are limits on measurement certainty that restrict how well we can know a particle’s speed and location. Some modern theories suggest a quantized universe: we might be living on a grid!

Here’s the point: approximations are a part of Nature, yet everything works out. Why? We only need to be accurate within our scale. Uncertainty at the atomic level doesn’t matter when you’re dealing with human-sized objects.

Every number has a scale

The twist is realizing that even numbers have a scale. Just like humans can’t directly observe atoms, some numbers can’t directly interact with “infinitesimals” or infinitely small numbers (in the line of 1/2, 1/3… 1/infinity).

But infinitesimals and atoms aren’t zero. Put a single atom on your bathroom scale, and the scale still reads nothing. Infinitesimals behave the same way: in our world of large numbers, 1 + infinitesimal looks just like 1 to us.

Now here’s the tricky part: A billion, trillion, quadrillion, kajillion infinitesimals is still undetectable! Yes, I know, in the real world if we keep piling atoms onto our scale, eventually it will register as some weight. But not so with infinitesimals. They’re on a different plane entirely — any finite amount of them will simply not be detectable. And last time I checked, we humans can only do things in finite amounts.
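Computers give us a rough analogy for this. A 64-bit float near 1.0 has a fixed resolution, so a small enough number is invisible to it. This is only an analogy: the `tiny` value below is an ordinary finite number that I picked for illustration, not a true infinitesimal.

```python
tiny = 1e-20  # far below the resolution of a 64-bit float near 1.0

print(tiny > 0)            # True: it is not zero...
print(1.0 + tiny == 1.0)   # True: ...but 1 + tiny looks just like 1

# Piling on a million of them, one at a time, is still undetectable,
# because every single addition rounds back to exactly 1.0
total = 1.0
for _ in range(1_000_000):
    total += tiny
print(total == 1.0)        # True
```

Here is where the analogy breaks down: if you first gather the million tiny values together (`tiny * 1_000_000` is about 1e-14) and then add that to 1.0, the float does notice. Like atoms on the bathroom scale, enough finite pieces eventually register. With infinitesimals, no finite pile ever does.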

Let’s think about infinity for a minute, intuitively:

- Infinity “exists” but is not reachable by our standard math. No amount of addition or multiplication will take you there — we need an infinite amount of addition to make infinity (circular, right?). Similarly, no finite amount of division will create an infinitesimal.

- Infinity and infinitesimals require new rules of arithmetic, just like fractions and complex numbers changed the way we do math. We’ll get into this more later.

It’s strange to think about numbers that appear to be zero at our scale, but aren’t. There’s a difference between “true” zero and a measured zero. I don’t fully grasp infinitesimals, but I’m willing to explore them since they make Calculus easier to understand.

Just remember that negative numbers were considered “absurd” even in the 1700s, but imagine doing algebra without them.

Life Lessons

Math can often apply to the real world. In this case, it’s the realization that accuracy exists on different levels, and perfect accuracy isn’t needed. We only need 40 digits of pi for our engineering calculations!

When doing market research, would knowing 80% vs 83.45% really change your business decision? The former is 100x less precise and probably 10x easier to get, yet contains almost the same decision-making information.

In science, there’s an idea of significant figures, which help portray uncertainty in our measurements. We’re so used to contrived math problems (“Suzy is driving at 50mph for 3 hours”) that we forget the real world isn’t that clean. Information can be useful even if it’s not perfectly precise.
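As a quick illustration of significant figures, here is a minimal sketch of rounding a measurement to a chosen number of significant figures. The `round_sig` helper is hypothetical (it is not a built-in), and it ignores edge cases such as infinities.

```python
import math

def round_sig(value, sig_figs):
    """Round `value` to `sig_figs` significant figures."""
    if value == 0:
        return 0.0
    # Position of the leading digit: 1 for 83.45, 0 for 8.3, -1 for 0.83
    magnitude = math.floor(math.log10(abs(value)))
    return round(value, sig_figs - 1 - magnitude)

print(round_sig(83.45, 1))    # 80.0
print(round_sig(83.45, 2))    # 83.0
print(round_sig(18.8495, 3))  # 18.8
```

The market research figure of 83.45% collapses to 80% at one significant figure, and for most decisions that coarser number tells the same story.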

Math Lessons

Calculus was first developed using infinitesimals, which were abandoned for techniques with more “rigor”. Only in the 1960s (not that long ago!) were the original methods shown to be justifiable, but it was too late: many calculus explanations are separate from the original insights.

Again, my goal is to understand the ideas behind Calculus, not simply rework the mechanics of its proofs. The first brain-bending ideas are that perfect accuracy isn’t necessary and that numbers can exist on different scales.

There’s a new type of number out there: the infinitesimal. In future posts we’ll see how to use them. Happy math.
